What's Happening?

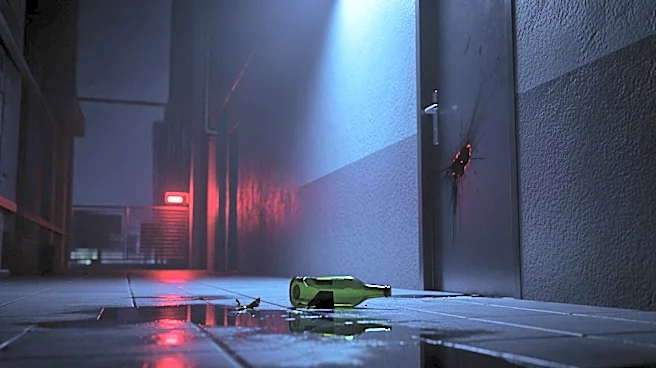

OpenAI CEO Sam Altman has responded to an attack on his home and to a New Yorker article questioning his trustworthiness. On Friday morning, a Molotov cocktail was allegedly thrown at Altman's San Francisco residence; no injuries were reported. A suspect was later arrested at OpenAI headquarters while threatening to burn down the building. Altman linked the attack to an 'incendiary article' published days earlier, suggesting that it had heightened the risks at a time of widespread anxiety about AI. The article, written by Ronan Farrow and Andrew Marantz, portrayed Altman as having a 'relentless will to power' and raised concerns about his trustworthiness. Altman acknowledged past mistakes, including a tendency to avoid conflict, which he said had caused problems for OpenAI. He also called for de-escalating the rhetoric around AI and for sharing the technology broadly.

Why Is It Important?

The incident underscores the growing tensions surrounding AI development and its societal implications. Altman's experience as a prominent figure in AI highlights the personal risks faced by leaders in the field. The New Yorker article's portrayal of Altman raises questions about leadership ethics in tech and could influence public perception of, and investor confidence in, OpenAI. The attack and the subsequent arrest may prompt discussions about security measures for tech executives. Altman's call for de-escalation and broad technology sharing reflects wider debates on AI governance and ethical development, debates that could shape policy decisions and industry standards.

What's Next?

The suspect's arrest may lead to legal proceedings that could reveal the motive behind the attack. OpenAI and other tech companies might reassess security protocols for their executives. The New Yorker article could spark further media scrutiny of Altman and OpenAI, influencing public discourse on AI ethics. Altman's response may prompt industry leaders to open discussions about responsible AI development and collaboration. Stakeholders, including policymakers and civil society groups, may push for clearer regulations on AI safety and ethical standards.