What's Happening?

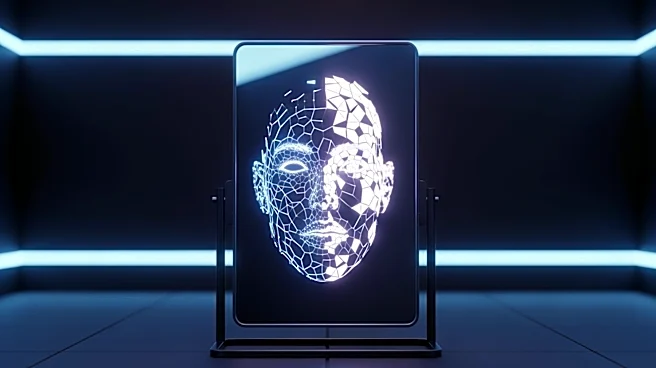

A study published in Nature introduced an AI model named 'Centaur,' designed to simulate human cognitive behavior using large language models. Initially, Centaur was praised for its performance across 160 tasks, including decision-making and executive control. However, a recent study from Zhejiang University challenges these claims, suggesting that Centaur's success may be due to overfitting rather than genuine understanding. The researchers found that Centaur often relied on learned statistical patterns rather than comprehending the tasks, akin to a student memorizing test formats without understanding the material. This raises questions about the model's ability to truly simulate human cognition.

Why It's Important?

The findings underscore how difficult it is to evaluate AI systems, particularly those claiming to replicate human cognitive processes. A model that leans on statistical patterns can perform well on a task without comprehending it, which makes its results easy to misinterpret. For developers and users of AI technologies, this emphasizes the need for rigorous testing and validation to confirm that a system actually understands and processes information as intended. The research also points to the broader challenge of achieving true language comprehension in AI, which is essential for developing systems that can effectively model human cognition.