What's Happening?

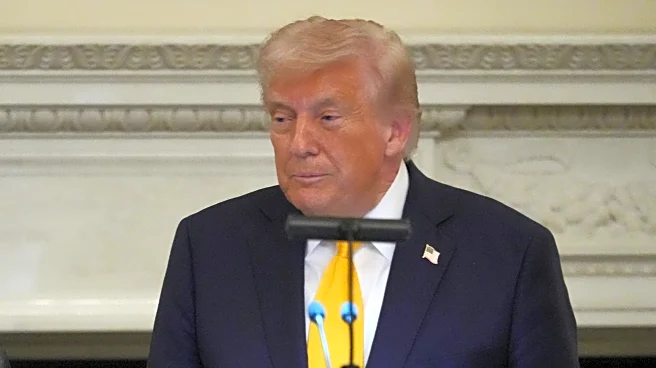

The Trump administration has introduced a legislative framework aimed at centralizing AI policy in the United States by preempting state laws. The framework seeks to establish a uniform national standard for AI regulation, potentially undermining state efforts to regulate AI technology. The proposal emphasizes innovation and the scaling of AI while placing significant responsibility for child safety on parents. It suggests that Congress should require AI companies to implement features that reduce risks to minors, but it contains no enforceable requirements. The framework also aims to prevent states from penalizing AI developers for third-party misconduct, and it includes neither liability frameworks nor independent oversight mechanisms.

Why It's Important?

The proposed framework could significantly impact the balance of power between federal and state governments in regulating AI. By centralizing AI policymaking in Washington, it may limit states' ability to act as early regulators of emerging AI risks. This approach is favored by many in the AI industry, as it provides a clear national standard and reduces the threat of regulation, potentially fostering innovation. However, critics argue that it could hinder states' ability to address specific local concerns and protect citizens from potential harms caused by AI. The framework's emphasis on parental responsibility for child safety may also shift the burden away from tech companies, raising concerns about accountability.

What's Next?

The Trump administration plans to work with Congress to turn this framework into legislation. The Commerce Department is expected to compile a list of state AI laws deemed onerous, which could affect states' eligibility for federal funds. The framework's implementation will likely face opposition from states that have already enacted AI regulations, as well as from advocacy groups concerned about the lack of accountability and oversight. The debate over the appropriate balance between federal and state regulation of AI is expected to continue, with potential legal challenges and legislative battles on the horizon.

Beyond the Headlines

The framework's focus on preventing government-driven censorship while allowing platform moderation raises questions about where censorship ends and standard content moderation begins. This ambiguity could complicate efforts to address issues such as misinformation and election interference. The absence of clear liability frameworks and enforcement mechanisms may also leave gaps in addressing novel harms caused by AI, potentially affecting public trust in AI technologies. The emphasis on protecting intellectual property rights and free speech highlights the ongoing tension between innovation and regulation in the AI space.