What's Happening?

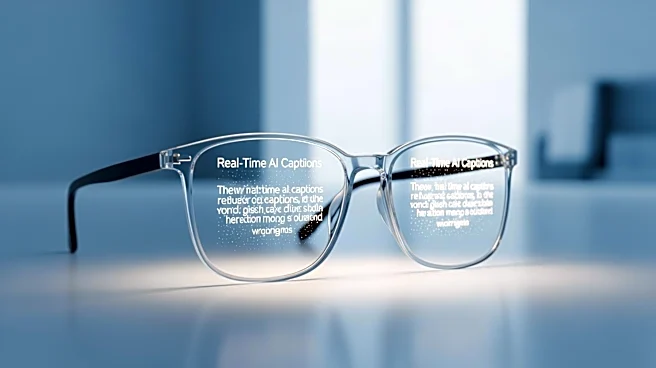

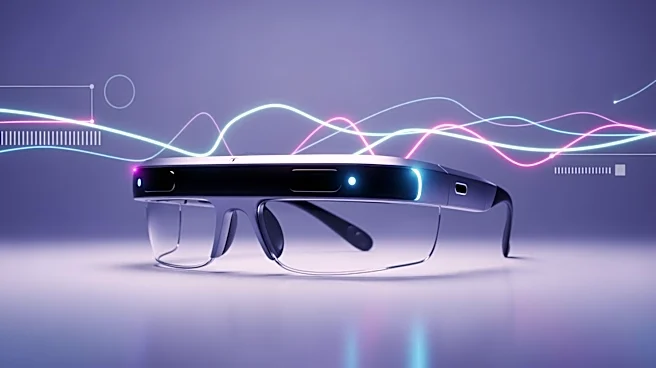

As of May 2026, live-captioning glasses have entered the mainstream, offering users real-time, AI-generated captions. The development marks a shift from experimental technology to practical application, with major companies such as Apple reportedly testing multiple smart-glasses designs. The glasses aim to improve accessibility in meetings and at public events by captioning speech for people with hearing impairments. However, challenges remain, including caption accuracy in noisy environments and privacy concerns.

Why It's Important?

Live-captioning glasses represent a major advance in accessibility technology, with the potential to change how people with hearing impairments take part in social and professional settings. Real-time captions can make communication easier and settings more inclusive. The technology also raises questions about privacy and consent, since the use of recording devices in public spaces may require new policies and regulations. If the glasses succeed, they could drive further innovation in augmented reality and accessibility solutions.

What's Next?

As live-captioning glasses become more widely available, stakeholders such as employers, venues, and regulatory bodies will need to address privacy and consent issues. The technology's adoption may lead to new standards and guidelines for the use of recording devices in public and private settings. Additionally, ongoing improvements in AI and hardware design are expected to enhance the accuracy and reliability of captions, making the technology more appealing to a broader audience.