What's Happening?

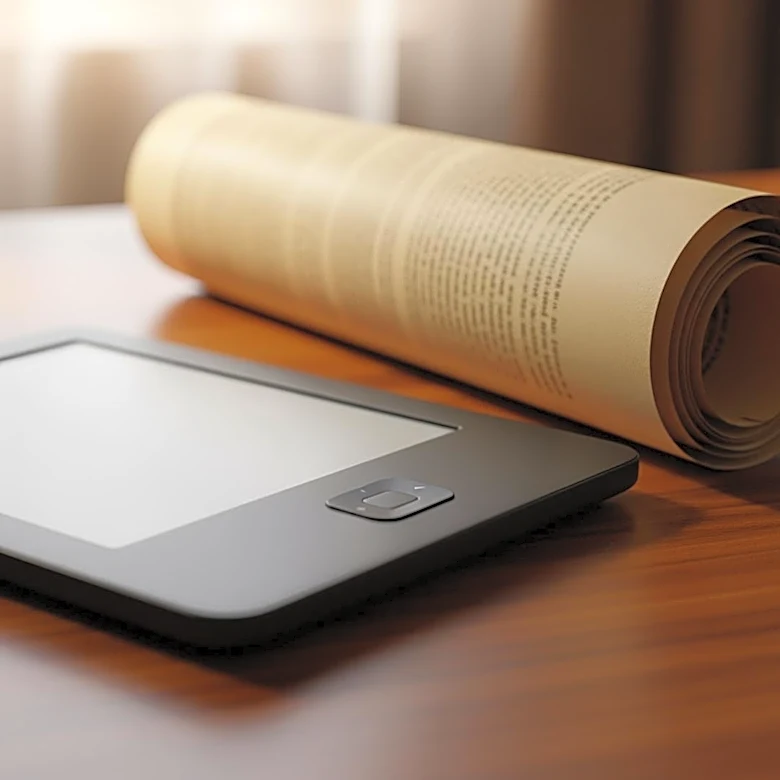

A new trend in AI development involves training large language models (LLMs) on historical data. A recent example is a model trained exclusively on information available before 1931. The model, named 'talkie-1930-13b-base', was trained on 260 billion tokens of pre-1931 English text, including books, newspapers, and scientific journals. The aim is to explore how an AI behaves when its training data comes from a specific historical period, free from modern influences. However, challenges remain, including data impurities and potential anachronistic influences during training.

Why Is It Important?

This development highlights the potential for AI to serve as a tool for historical analysis, offering insights into past societal contexts and possibly predicting future trends based on historical data. By studying how such a model interprets and processes historical information, researchers can better understand how language and ideas evolved over time. The approach also raises questions about the accuracy and limitations of AI models trained on historical data, which could affect their use in educational and research settings.

Beyond the Headlines

The creation of AI models based on historical data prompts discussions about the ethical implications of using AI to interpret and potentially rewrite historical narratives. It also raises questions about the role of AI in preserving cultural heritage and the challenges of ensuring accuracy when dealing with historical data. As AI continues to evolve, its application in historical research could lead to new methodologies and insights, but it also requires careful consideration of the potential biases and limitations inherent in the data used.