What's Happening?

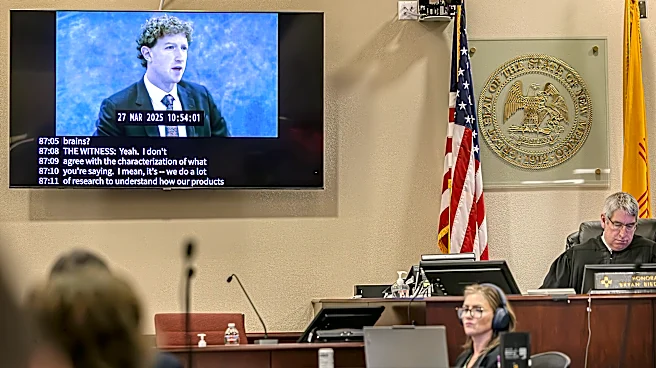

Meta, the company behind Facebook and Instagram, has been found in preliminary violation of the European Union’s Digital Services Act (DSA) for failing to prevent children under 13 from accessing its platforms. The European Commission's investigation, initiated in May 2024, revealed that Meta's measures to restrict underage users are insufficient. Although Meta's terms and conditions require users to be at least 13 years old, many underage users reportedly bypass the rule by entering fake birthdates. Meta's tools for reporting underage accounts were also criticized as complex and ineffective. The breach could lead to fines of up to 6% of Meta's global annual turnover. The Commission's findings come amid broader concerns about the mental and physical wellbeing of young users on social media and the potentially addictive effects of Meta's platforms. Countries such as Spain and France are considering, or have already implemented, stricter age restrictions for social media access.

Why It's Important?

The European Commission's preliminary findings against Meta highlight significant concerns regarding child safety on social media platforms. This development underscores the growing scrutiny of tech companies' responsibilities in protecting young users from potential harm. The potential fines, which could reach 6% of Meta's global annual turnover, represent a substantial financial risk for the company and could prompt other tech giants to reassess their age verification and child protection measures. The issue also raises broader questions about the effectiveness of current regulations and the need for more robust enforcement mechanisms. As countries like Spain and France move toward stricter age restrictions, this case could set a precedent for future regulatory action in the tech industry, influencing how companies manage user safety and regulatory compliance.

What's Next?

Meta may face significant financial penalties if the preliminary finding that it violated the EU's Digital Services Act is confirmed. The company will likely need to enhance its age verification processes and simplify its tools for reporting underage accounts to comply with EU regulations. This situation may prompt Meta to engage in discussions with European regulators to negotiate potential penalties and explore ways to improve its compliance measures. Other tech companies might also take proactive steps to review their policies and avoid similar regulatory challenges. The broader industry could see increased pressure to implement more effective child protection measures, potentially leading to new standards and practices for age verification and user safety.

Beyond the Headlines

The case against Meta highlights ethical concerns about the role of social media platforms in safeguarding young users. It raises questions about the balance between user growth and safety, as companies may prioritize expanding their user base over implementing stringent age restrictions. The situation also reflects cultural shifts in how societies view the responsibilities of tech companies in protecting vulnerable populations. As awareness of the potential harms of social media to young users grows, there may be increased advocacy for more comprehensive regulations and industry standards. This could lead to long-term changes in how social media platforms operate and interact with their users, emphasizing safety and ethical considerations.